AI-generated content is transforming marketing, but it’s also creating compliance risks that traditional governance frameworks weren’t designed to handle.

- Bias, misinformation, and transparency gaps in AI-generated content expose organizations to regulatory penalties and reputational damage.

- The EU AI Act, updated GDPR enforcement, and emerging U.S. state regulations are creating a complex global compliance landscape.

- Content approval workflows in 2026 must evolve beyond manual review processes to keep pace with AI-driven content velocity.

- Compliance automation tools that combine AI-powered scanning with centralized asset management offer the most effective path forward.

- Organizations that treat AI marketing compliance as a content operations challenge rather than a legal afterthought will build sustainable advantages.

The explosion of AI-generated marketing content has changed the compliance equation. What once required weeks of human review now happens in minutes, and marketing teams are producing content at volumes that would have seemed impossible just two years ago. Organizations are now managing an average of four AI-related risks, double the number in 2022, with regulatory compliance ranking among the top concerns.

This acceleration creates a paradox. The same AI capabilities that enable personalized content operations at scale also introduce risks that regulators worldwide are scrambling to address. Bias embedded in training data, hallucinated facts presented as truth, and opaque algorithmic decision-making all create liability for brands that fail to effectively govern their AI-generated content.

Your organization needs an AI marketing compliance strategy. The question is whether your current governance framework can handle what’s coming next.

What Risks Does AI-Generated Content Introduce?

Understanding the risks AI brings to marketing content is the first step toward building effective governance. These risks are documented issues that have already resulted in regulatory action, consumer backlash, and financial penalties for organizations that failed to anticipate them.

Bias and Algorithmic Discrimination

AI systems learn from the data they’re trained on, and that data often reflects historical biases that marketers would never intentionally perpetuate. When these biases surface in marketing content or personalization algorithms, the consequences extend beyond brand reputation into legal liability.

Personalization engines that exclude certain demographics from premium offers, image generation tools that default to stereotypical representations, and targeting algorithms that inadvertently discriminate based on protected characteristics all create compliance exposure. The challenge is that these biases often remain invisible until someone explicitly tests for them or a regulator comes knocking.

Organizations using AI for marketing personalization need systematic bias auditing processes. Proper auditing means testing outputs across demographic segments, documenting results, and maintaining records that demonstrate due diligence in identifying and correcting discriminatory patterns.
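One way to picture the auditing step described above is a small script that compares how often each segment receives an offer. This is a minimal sketch, not a legal test: the segment names, the sample log, and the 0.8 ratio threshold are all illustrative assumptions, and a real audit would use your own data and a threshold agreed with counsel.

```python
from collections import defaultdict


def selection_rates(records):
    """Return, per segment, the share of records where the offer was shown."""
    shown = defaultdict(int)
    total = defaultdict(int)
    for segment, offered in records:
        total[segment] += 1
        shown[segment] += int(offered)
    return {seg: shown[seg] / total[seg] for seg in total}


def audit_disparity(records, min_ratio=0.8):
    """Compare each segment's rate with the highest rate and flag large gaps.

    min_ratio is an illustrative threshold (an assumption), not a legal standard.
    """
    rates = selection_rates(records)
    best = max(rates.values())
    report = {}
    for seg, rate in rates.items():
        ratio = rate / best if best > 0 else 1.0
        report[seg] = {
            "rate": round(rate, 3),
            "ratio": round(ratio, 3),
            "flagged": ratio < min_ratio,
        }
    return report


# Hypothetical personalization log: (segment, was the premium offer shown?)
sample_log = (
    [("segment_a", True)] * 40 + [("segment_a", False)] * 60
    + [("segment_b", True)] * 25 + [("segment_b", False)] * 75
)

audit_report = audit_disparity(sample_log)
# segment_b: rate 0.25, ratio 0.625, flagged for follow-up
```

Storing each report alongside the date and the model version tested would give the documented record of due diligence that the paragraph above calls for.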

Misinformation and Hallucinations

Large language models have a well-documented tendency to generate confident-sounding content that is factually incorrect. In marketing contexts, the risks range from minor embarrassment to serious regulatory violations, particularly in industries where accuracy claims are legally scrutinized.

A healthcare company that publishes AI-generated content containing inaccurate efficacy claims risks FDA enforcement action. A financial services firm that allows hallucinated statistics into marketing materials risks SEC penalties. Even in less regulated industries, misleading AI-generated content can erode consumer trust in ways that take years to rebuild.

The solution requires human review layers specifically designed to catch factual errors, combined with content governance frameworks that flag AI-generated content for enhanced scrutiny before publication.

Transparency and Explainability Gaps

Modern AI systems often function as black boxes, making decisions through processes that even their creators struggle to explain. When these systems influence which consumers see which marketing messages, regulators want to know how and why those decisions were made.

GDPR’s provisions on automated decision-making, often described as a right to explanation, already require organizations to provide meaningful information about automated decisions that significantly affect individuals. Similar requirements are emerging in U.S. state privacy laws and industry-specific regulations. Marketing teams that can’t explain why their AI showed a particular ad to a particular consumer face growing compliance exposure.

Building explainability into AI marketing systems requires selecting tools that provide decision audit trails, documenting the logic behind personalization rules, and maintaining records that can satisfy regulatory inquiries.

How Are Global Regulations Reshaping AI Marketing Compliance?

Regulations for AI marketing compliance are evolving faster than most organizations realize. What seemed like distant future requirements just 18 months ago are now enforceable obligations with significant penalties attached.

The EU AI Act: A New Compliance Standard

The EU AI Act is the world’s first comprehensive legal framework for artificial intelligence regulation. Its requirements began taking effect in February 2025, with transparency obligations for general-purpose AI models effective as of August 2025.

For marketing teams, the most immediate implications involve transparency. AI-generated content must be clearly labeled so consumers know when they’re interacting with machine-created material. Chatbots and virtual assistants must disclose their AI nature at the start of interactions. Deepfakes and synthetically altered media require explicit identification.

Penalties for non-compliance are substantial. Fines can reach €35 million or 7% of global annual turnover, whichever is higher, for the most serious violations. Even limited-risk AI systems used in marketing face transparency obligations that many organizations haven’t yet implemented.
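To make the scale of that top penalty tier concrete, the ceiling can be worked out in a few lines. This is a rough sketch that assumes the higher of the two figures applies; it ignores the lower tiers and any special treatment for smaller companies, and the function name and turnover figures are hypothetical. It gives an upper bound, not a prediction of an actual fine.

```python
def max_fine_eur(global_annual_turnover_eur):
    """Upper bound for the most serious violations: the higher of
    EUR 35 million or 7% of global annual turnover."""
    fixed_cap = 35_000_000
    turnover_cap = global_annual_turnover_eur * 7 / 100
    return max(fixed_cap, turnover_cap)


# Hypothetical companies
smaller_company_cap = max_fine_eur(100_000_000)    # 7% is EUR 7M, so the fixed cap applies
larger_company_cap = max_fine_eur(2_000_000_000)   # 7% is EUR 140M, so the turnover cap applies
```

For any organization with turnover above €500 million, the percentage figure is the one that sets the ceiling.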

GDPR and CCPA: Evolving Privacy Obligations

While GDPR and CCPA weren’t written specifically for AI, their requirements take on new meaning when applied to AI-driven marketing. Consent management is more complex when AI systems process personal data. The right to explanation creates documentation burdens for automated marketing decisions.

GDPR enforcement has intensified. Cumulative fines have surpassed €5.6 billion, with individual penalties reaching into the hundreds of millions for major violations. AI marketing compliance failures increasingly appear in enforcement actions, particularly around consent and transparency requirements.

CCPA and its expanding cohort of U.S. state privacy laws create additional obligations around consumer rights to know how their data informs AI-driven marketing decisions. Organizations operating nationally must navigate a patchwork of state requirements that continues to grow more complex.

Emerging U.S. Frameworks and Industry-Specific Rules

U.S. regulators are actively targeting AI marketing practices through existing enforcement authority. The FTC has made AI-washing a priority, pursuing companies that overstate their AI capabilities or use AI in deceptive ways.

Industry-specific requirements add further layers. Financial services firms must navigate FINRA advertising rules that apply to AI-generated content. Life sciences companies face FDA regulations around promotional materials that become more complex when AI is involved. Organizations in regulated industries need compliance workflows that integrate marketing production with regulatory review processes.

Regulators worldwide are moving toward greater AI transparency, accountability, and consumer protection requirements. Organizations that build compliance capabilities now will be better positioned than those scrambling to catch up after enforcement actions begin.

What Does Effective Content Governance Look Like in 2026?

Traditional content governance frameworks assumed human creation and review at every stage. AI-generated content breaks those assumptions, requiring new approaches that balance speed with control and automation with accountability.

Centralized Asset Control and Version Management

When AI can generate hundreds of content variations in the time it once took to create one, tracking what exists and where it came from becomes far more difficult. Organizations need centralized repositories that serve as the single source of truth for all content assets, whether human-created or AI-generated.

Centralization enables the audit trails that regulators expect. Every piece of content should have documented provenance, showing when it was created, by what process, who reviewed it, and what approvals it received. When questions arise about specific marketing materials, organizations need the ability to reconstruct the complete history quickly and accurately.
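The provenance record described above can be sketched as a simple data structure. The class names, fields, and sample actors below are hypothetical and do not reflect any particular platform's schema; the point is only that each asset carries an append-only history that can be replayed on request.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ProvenanceEvent:
    action: str          # e.g. "created", "reviewed", "approved"
    actor: str           # person or system responsible for the action
    timestamp: datetime
    note: str = ""


@dataclass
class ContentAsset:
    asset_id: str
    version: int
    ai_generated: bool
    history: list = field(default_factory=list)

    def record(self, action, actor, note=""):
        """Append an event; existing events are never edited or removed."""
        event = ProvenanceEvent(action, actor, datetime.now(timezone.utc), note)
        self.history.append(event)

    def audit_trail(self):
        """Return the history as human-readable lines, oldest first."""
        lines = []
        for e in self.history:
            suffix = f" ({e.note})" if e.note else ""
            lines.append(f"{e.timestamp.isoformat()} {e.action} by {e.actor}{suffix}")
        return lines


# Hypothetical asset moving through creation, review, and approval
banner = ContentAsset(asset_id="banner-001", version=1, ai_generated=True)
banner.record("created", "image-model", "generated from campaign brief")
banner.record("reviewed", "j.doe")
banner.record("approved", "legal-team")
```

Calling `banner.audit_trail()` then reconstructs when the asset was created, by what process, who reviewed it, and what approvals it received.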

Version control is vital when AI enables rapid iteration. Without systematic tracking, organizations lose visibility into which versions were published, which were approved, and which might contain compliance issues that require remediation. Modern digital asset management approaches address these challenges through automated tracking that captures version history without requiring manual documentation.

Automated Content Approval Workflows

Manual review processes that worked for traditional content volumes collapse under the weight of AI-generated content. Content approval workflows in 2026 must incorporate intelligent automation that handles routine compliance checks while routing exceptions to human reviewers.

Effective workflows combine AI-powered scanning with structured approval chains. Initial automated review flags potential compliance issues before human reviewers see the content. Routing logic ensures the right stakeholders review appropriate content types. Escalation paths handle edge cases that require additional expertise.

The goal is to focus human attention where it matters most while automating the mechanical aspects of review. This approach allows organizations to maintain compliance standards even as content volumes scale.
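The scan, route, and escalate pattern described in this section can be illustrated with a few rules. This is a simplified sketch under stated assumptions: the content types, reviewer queues, and the "critical:" flag prefix are invented for the example, and a production workflow would draw them from your own policies.

```python
from dataclasses import dataclass


@dataclass
class ScanResult:
    content_type: str    # e.g. "social", "financial", "healthcare"
    flags: list          # issues raised by the automated scan

# Hypothetical mapping from regulated content types to reviewer queues
REVIEWERS = {
    "financial": "compliance-finance",
    "healthcare": "regulatory-affairs",
}


def route(scan):
    """Decide where a scanned piece of content goes next."""
    # Escalation path: critical findings always go to legal.
    if any(flag.startswith("critical:") for flag in scan.flags):
        return "escalate:legal"
    # Regulated content types always get a specialist reviewer.
    if scan.content_type in REVIEWERS:
        return f"review:{REVIEWERS[scan.content_type]}"
    # Any other flagged content goes to a general human reviewer.
    if scan.flags:
        return "review:marketing-lead"
    # Clean, unregulated content passes without manual review.
    return "auto-approve"


# Hypothetical scan results
clean_social = route(ScanResult("social", []))
clean_financial = route(ScanResult("financial", []))
flagged_social = route(ScanResult("social", ["unverified-statistic"]))
critical_health = route(ScanResult("healthcare", ["critical:efficacy-claim"]))
```

Ordering the rules from most to least severe means a critical finding is never downgraded to a routine review, which keeps human attention on the cases that need it.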

How Can Compliance Automation Tools Transform Your Workflow?

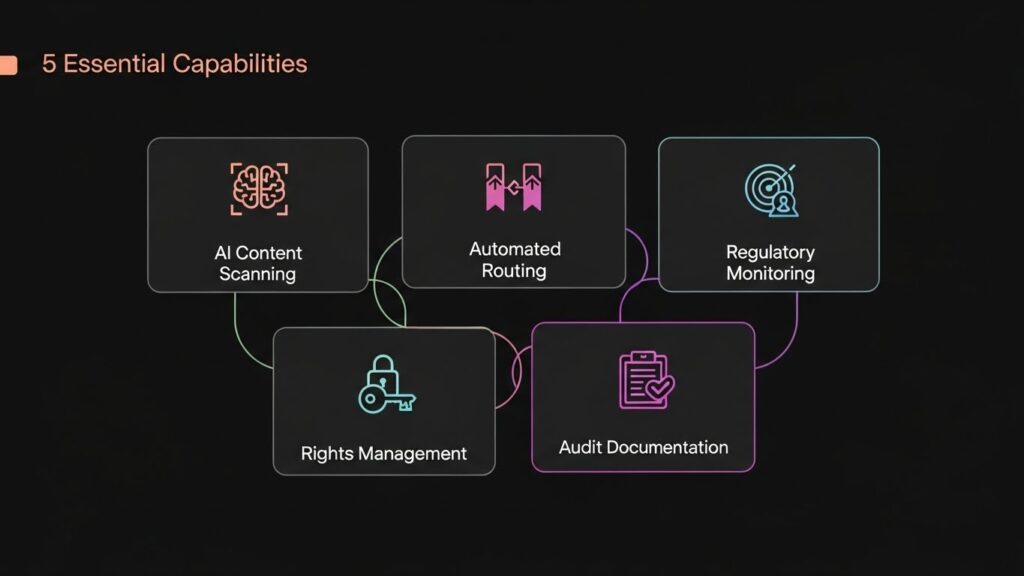

The right compliance automation tools can reduce the burden of AI marketing compliance while improving outcomes. Here are five capabilities that distinguish effective solutions from those that simply add another layer of complexity.

- AI-Powered Content Scanning: Automated analysis that evaluates content against regulatory requirements, brand guidelines, and compliance rules before publication. The best systems learn from feedback, improving accuracy over time as they process more content.

- Automated Approval Routing: Intelligent workflow management that directs content to appropriate reviewers based on content type, risk level, and stakeholder availability. This routing eliminates the email chains and spreadsheet tracking that slow traditional review processes.

- Real-Time Regulatory Monitoring: Continuous tracking of regulatory changes that automatically updates compliance rules and alerts teams to new requirements affecting their content. This capability is essential given the pace of regulatory evolution.

- Digital Rights Management: Systematic tracking of usage rights, consent status, and expiration dates across all content assets. When AI-generated content incorporates elements with specific usage restrictions, maintaining compliance requires automated monitoring.

- Audit-Ready Documentation: Automatic generation and retention of compliance records that satisfy regulatory requirements. When auditors or regulators request evidence of compliance processes, organizations need the ability to produce comprehensive documentation without manual reconstruction.

What Role Does Rights Management Play in AI Compliance?

AI-generated content introduces novel rights management challenges that traditional approaches weren’t designed to address. When an AI system creates an image, who owns it? When AI-generated text incorporates patterns learned from copyrighted sources, what obligations arise? These questions don’t have settled answers, making proactive rights management essential.

Organizations need systems that can detect and label AI-generated content throughout its lifecycle. This identification supports both regulatory transparency requirements and internal governance needs. When questions arise about specific assets, teams need immediate visibility into whether content was AI-generated and what review processes it underwent.

Consent management extends to AI training data as well as marketing personalization. Organizations using customer data to train or fine-tune AI models need documented consent for those uses. When AI-generated content is personalized based on individual data, consent requirements apply to those decisions as well.

Expiration and renewal tracking prevents compliance failures that occur when content remains in circulation after rights expire. Tracking is particularly important for AI-generated content that may incorporate elements with varying rights status. Automated monitoring ensures nothing falls through the cracks as content libraries grow.
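As a rough illustration of the expiration tracking described above, the check below sorts assets into expired, expiring soon, and active. The asset names, dates, and 30-day warning window are assumptions made for the example.

```python
from datetime import date, timedelta


def rights_status(expires_on, today, warn_days=30):
    """Classify one asset's usage rights relative to today's date."""
    if expires_on < today:
        return "expired"
    if expires_on - today <= timedelta(days=warn_days):
        return "expiring-soon"
    return "active"


def assets_needing_action(assets, today, warn_days=30):
    """Return only the assets whose rights are expired or about to expire."""
    result = {}
    for asset_id, expires_on in assets.items():
        status = rights_status(expires_on, today, warn_days)
        if status != "active":
            result[asset_id] = status
    return result


# Hypothetical library: asset id -> date the usage rights end
library = {
    "hero-image": date(2026, 1, 15),
    "product-video": date(2026, 3, 10),
    "tagline-audio": date(2026, 12, 31),
}

needs_action = assets_needing_action(library, today=date(2026, 3, 1))
# {"hero-image": "expired", "product-video": "expiring-soon"}
```

Run on a schedule, a check like this could pull expired assets from circulation and prompt renewals before the warning window closes.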

How Should Organizations Prepare for the Future of AI Marketing Compliance?

Building sustainable AI marketing compliance requires investment across technology, process, and culture. Organizations that treat this as a one-time project rather than an ongoing capability will find themselves perpetually catching up to regulatory requirements.

Start by establishing governance frameworks that define how AI will be used in marketing, what oversight applies, and how compliance will be maintained. These frameworks should be living documents that evolve as regulations change and organizational AI use matures.

Technology investment should prioritize platforms that integrate content operations with compliance capabilities. Point solutions that address individual compliance requirements create integration challenges and visibility gaps. Unified platforms that manage content from creation through distribution provide the comprehensive control that effective compliance requires.

Training and culture matter as much as technology. Marketing teams need to understand the compliance implications of the AI tools they use daily. Compliance teams need sufficient AI literacy to effectively evaluate risks. Building this shared understanding takes ongoing investment in education and cross-functional collaboration.

The organizations that thrive in this new environment view AI marketing compliance as a strategic capability rather than a regulatory burden. Done well, governance enables confident AI adoption that drives competitive advantage. Done poorly, it creates ongoing liability that constrains innovation and exposes organizations to escalating risks.

Frequently Asked Questions

What is AI marketing compliance?

AI marketing compliance encompasses the practices, processes, and technologies organizations use to ensure their AI-generated marketing content meets regulatory requirements, industry standards, and internal governance policies. This includes transparency about AI use, bias prevention, accuracy verification, and documentation of AI-driven marketing decisions.

What regulations apply to AI-generated marketing content?

Key regulations include the EU AI Act, which requires transparency and labeling for AI-generated content; GDPR and U.S. state privacy laws, which govern data use in AI personalization; and industry-specific rules like FINRA for financial services and FDA requirements for life sciences. FTC enforcement also targets deceptive AI marketing practices.

How do content approval workflows help with compliance?

Content approval workflows in 2026 automate routine compliance checks while ensuring appropriate human review for higher-risk content. They create audit trails documenting who approved what and when, route content to qualified reviewers based on content type and risk level, and prevent non-compliant content from reaching publication.

What features should compliance automation tools include?

Essential features include AI-powered content scanning against compliance rules, automated approval routing with escalation paths, real-time regulatory monitoring, digital rights management, version control with complete audit trails, and documentation capabilities that support regulatory inquiries and audits.

Building Your AI Marketing Compliance Foundation

Accelerating AI adoption and intensifying regulatory scrutiny make 2026 a pivotal moment for marketing organizations. Those that invest in robust compliance capabilities now will be positioned to leverage AI confidently while competitors struggle with governance gaps.

Effective compliance requires centralized content control, automated workflows, and systematic rights management working together as an integrated system. Aprimo’s AI-powered content operations platform delivers these capabilities through intelligent automation that maintains compliance without sacrificing speed. Request a demo and discover how leading brands are governing AI-generated content at scale.