Automating Brand Compliance for Global Digital Asset Management

AI-driven brand governance and compliance gives enterprises a controlled way to automate the review, enrichment, and distribution of digital assets. Unlike basic cloud file sharing, an AI-powered asset management system combines persistent metadata, automated compliance checks, role-based security, and digital rights management.

As the volume of content required for omnichannel strategies grows, manual gatekeeping becomes a liability that introduces risk and slows production. Establishing a system of action where AI agents manage routine compliance tasks allows creative teams to focus on high-value work while ensuring every published asset remains within brand and legal boundaries.

TL;DR

Enterprises can use AI to automate brand governance by deploying specialized agents for metadata enrichment and regulatory checks. This approach balances speed with safety by maintaining human-in-the-loop oversight and persistent monitoring across all distribution channels.

How Do You Use AI for Brand Governance and Compliance?

Enterprise brand governance is more than a consistency check; it ensures that every digital asset aligns with legal, regional, and ethical standards before it reaches a customer. Traditional brand management often relies on manual review cycles where creative and legal teams verify logos, color palettes, and usage rights through a series of spreadsheets and email chains. This human-centric model is increasingly strained as the volume of content required for global, omnichannel campaigns continues to grow. When governance is treated as a final gate rather than a continuous thread, organizations face bottlenecks that delay time to market and increase the likelihood of human error.

Automating these checks requires moving beyond standard folder structures toward a dedicated system of action. For high-stakes industries like healthcare, finance, or global manufacturing, a baseline level of file management is insufficient. These organizations require a framework that can objectively evaluate an asset against a set of predefined rules, such as identifying whether a required safety disclaimer is missing or whether an image of a person is being used outside its licensed regional territory. Platforms like Aprimo address this by embedding AI-powered compliance agents directly into the workflow, allowing for automated, objective, rule-based checks that support requirements such as GDPR, FINRA rules, and the EU AI Act. This transition from manual oversight to automated governance means policies are enforced at the point of content use.
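
To make the idea of an objective, rule-based check concrete, here is a minimal Python sketch of the two rules mentioned above: a missing disclaimer and use outside a licensed territory. The `Asset` record, its field names, and the `check_asset` function are hypothetical and written for illustration; they are not Aprimo's API or data model.

```python
from dataclasses import dataclass, field


@dataclass
class Asset:
    """Hypothetical asset record; field names are illustrative only."""
    asset_id: str
    text: str  # copy or extracted text attached to the asset
    licensed_regions: set[str] = field(default_factory=set)


def check_asset(asset: Asset, target_region: str, required_disclaimer: str) -> list[str]:
    """Return a list of rule violations; an empty list means the asset passed."""
    violations = []
    # Rule 1: the required disclaimer must appear in the asset's text.
    if required_disclaimer.lower() not in asset.text.lower():
        violations.append("missing_disclaimer")
    # Rule 2: the asset may only be used in regions covered by its license.
    if target_region not in asset.licensed_regions:
        violations.append("region_not_licensed")
    return violations


banner = Asset("banner-001", "Save more today. Terms apply.", {"US", "CA"})
print(check_asset(banner, "DE", "Investing involves risk"))
# prints ['missing_disclaimer', 'region_not_licensed']
```

Because each rule returns a named violation rather than a simple pass or fail, a reviewer can see exactly why an asset was held back.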

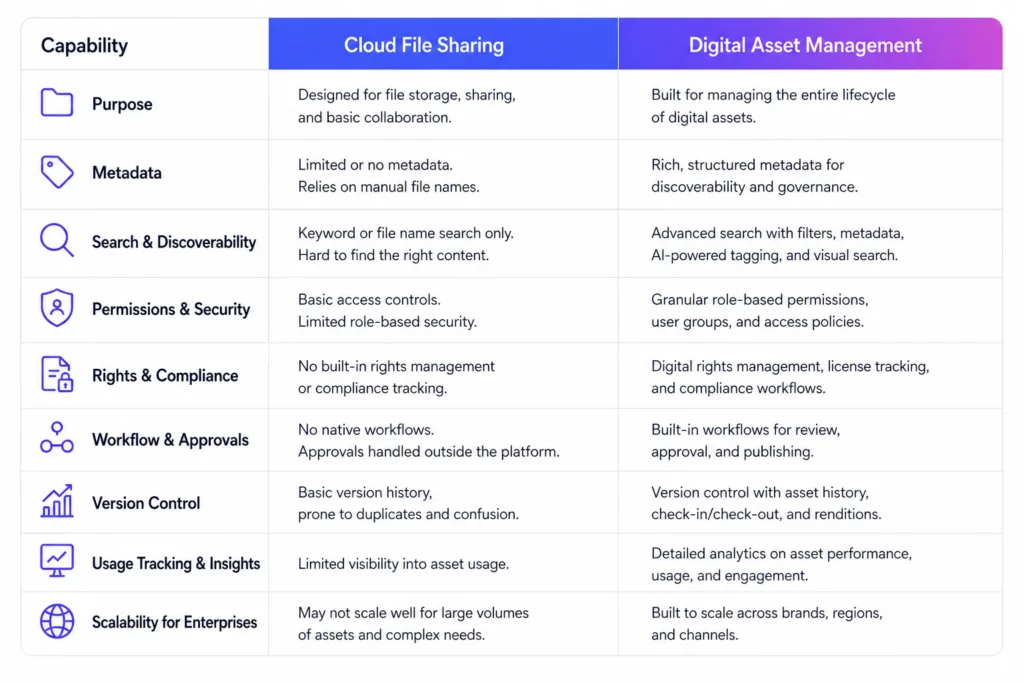

Cloud File Sharing vs Digital Asset Management: What Is the Difference?

While both cloud file sharing and enterprise digital asset management provide a space to store content, they operate with fundamentally different philosophies regarding compliance. Basic cloud file sharing is designed for collaborative storage and the simple exchange of files; it excels at accessibility but provides little in the way of structured metadata or proactive oversight. In these systems, governance is typically externalized, meaning teams must manually check for rights and brand alignment before uploading or downloading a file. This creates a ‘dumping ground’ effect where assets become undiscoverable and risk profiles remain high.

In contrast, enterprise systems built for brand governance treat metadata as a foundational requirement for security and discoverability. These platforms utilize advanced capabilities like role-based permissions, digital rights management, and automated audit trails to ensure that only approved assets are accessible for specific use cases. Unlike a standard storage solution that lacks native personalization or brand-checking capabilities, a dedicated DAM provides an integrated environment where metadata enrichment, AI-powered discovery, and regulatory validation work in tandem. By centralizing these functions, enterprises can maintain a single source of truth that mitigates the risk of using expired, off-brand, or non-compliant content across their various digital channels.

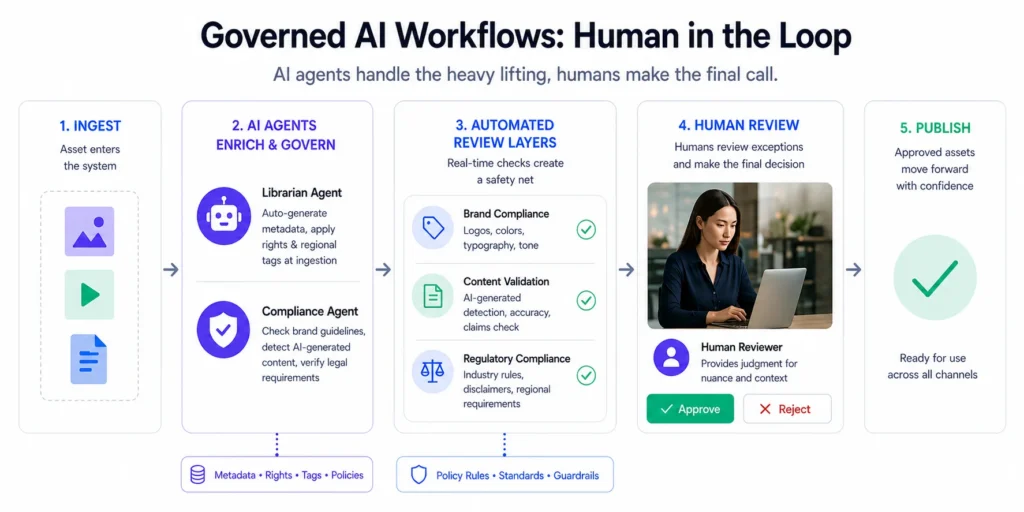

How Can Enterprises Implement Governed AI Workflows?

To use AI safely, organizations must implement a human-in-the-loop oversight model where automation handles the heavy lifting of evaluation while humans remain the final decision-makers for subjective nuances. One approach involves deploying specialized agents that focus on specific aspects of the content lifecycle. For instance, librarian agents can automate the creation and enrichment of metadata at the point of ingestion, ensuring that every asset is born with the necessary rights and regional tags. This ensures that assets are findable and governed from the moment they enter the ecosystem, rather than being retroactively tagged.
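
As a rough illustration of enrichment at the point of ingestion with a human still in the loop, the sketch below merges agent-suggested tags into the uploaded metadata and routes incomplete assets to a reviewer. The required tag names, the status values, and the `enrich_on_ingest` function are assumptions made for this example rather than features of any specific platform.

```python
REQUIRED_TAGS = ("rights_holder", "license_expiry", "regions")  # assumed required fields


def enrich_on_ingest(metadata: dict, suggested: dict) -> dict:
    """Merge agent-suggested tags into uploaded metadata and flag gaps for a human.

    `suggested` stands in for whatever an enrichment agent proposes. Non-empty
    values supplied by the uploader are never overwritten.
    """
    enriched = dict(suggested)
    enriched.update({k: v for k, v in metadata.items() if v})  # uploader values win
    missing = [tag for tag in REQUIRED_TAGS if not enriched.get(tag)]
    # Human-in-the-loop: incomplete assets go to review; complete ones still
    # wait for a person to approve them.
    enriched["status"] = "needs_human_review" if missing else "ready_for_approval"
    enriched["missing_tags"] = missing
    return enriched


record = enrich_on_ingest(
    {"rights_holder": "Example Studio", "regions": ""},
    {"regions": ["US", "CA"], "alt_text": "Product on a white background"},
)
print(record["status"], record["missing_tags"])
# prints needs_human_review ['license_expiry']
```

Neither status publishes the asset automatically, which keeps the final decision with a person.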

Strategic implementation also requires automated review layers that act as a safety net for creative production. Compliance agents can be trained to recognize specific brand assets, detect AI-generated content for transparency, or verify that text-based assets adhere to industry-specific legal requirements. Aprimo helps enterprises move beyond basic cloud storage by combining secure digital asset management, metadata governance, rights management, and automated compliance agents to ensure brand standards are met without slowing down creative velocity. By establishing these automated feedback loops, teams can identify creative irregularities or compliance gaps in real time, reducing the reliance on downstream manual reviews that often delay campaign launches.

Why Is Persistent Compliance Monitoring Essential for Global Brands?

Risk mitigation in modern marketing requires active monitoring of assets even after they leave the central repository. Traditional governance often stops once a file is downloaded, leaving a blind spot as content is published to CMS, PIM, and social media channels. A governed approach ensures that policies and usage rights are extended to every point of consumption. This is particularly critical when managing thousands of assets across global brand portfolios where an expired license on a single webpage could lead to significant financial and legal consequences.

By using AI to persistently scan downstream systems and channels, organizations can detect out-of-compliance usage, such as an image being used after its expiration date or in a restricted region. This capability transforms the DAM from a static library into a dynamic hub for content intelligence. Platforms like Aprimo provide comprehensive visibility and an audit trail, detailing who or what used content, when, and why. This level of oversight, combined with AI-powered content discovery that can surface assets faster than traditional keyword searches, allows enterprises to maintain a robust security posture while facilitating the rapid delivery of personalized experiences across all customer touchpoints.
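
The downstream scan described here can be sketched in a few lines of Python. The `Placement` and `License` records, the reason codes, and the `scan_placements` function are hypothetical; a real deployment would pull placements from CMS, PIM, or social integrations rather than from a hard-coded list.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Placement:
    """Hypothetical record of one in-market use of an asset."""
    asset_id: str
    channel: str  # e.g. "cms", "pim", "social"
    region: str


@dataclass(frozen=True)
class License:
    expires: date
    regions: frozenset[str]


def scan_placements(placements, licenses, today):
    """Return (placement, reason) pairs for every out-of-compliance use."""
    findings = []
    for p in placements:
        lic = licenses.get(p.asset_id)
        if lic is None:
            findings.append((p, "no_license_on_record"))
        elif today > lic.expires:
            findings.append((p, "license_expired"))
        elif p.region not in lic.regions:
            findings.append((p, "restricted_region"))
    return findings


licenses = {
    "img-1": License(date(2024, 12, 31), frozenset({"US", "CA"})),
    "img-2": License(date(2026, 6, 30), frozenset({"US"})),
}
placements = [
    Placement("img-1", "cms", "US"),     # license has lapsed
    Placement("img-2", "social", "FR"),  # outside licensed territory
    Placement("img-2", "pim", "US"),     # compliant
]
for placement, reason in scan_placements(placements, licenses, date(2025, 3, 1)):
    print(placement.asset_id, placement.channel, reason)
```

Running a scan like this on a schedule, and storing each finding with a timestamp, is one simple way to build the kind of audit trail the section describes.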

Conclusion

Securing a global brand against compliance risks requires more than a modern repository; it requires a shift toward automated, governed workflows that protect the organization without stifling creativity. By integrating AI agents that handle metadata enrichment, regulatory validation, and persistent monitoring, enterprises can ensure that every asset serves the brand rather than exposing it to risk. This creates an environment where robust security and seamless accessibility coexist, allowing marketing teams to scale their content operations with confidence. Explore how a system of action can bring that balance to your global portfolio.

FAQ

What is AI brand governance?

AI brand governance uses specialized algorithms and agents to automatically verify that digital assets meet brand guidelines, legal requirements, and regional regulations, reducing the need for manual review of every file.

How does human-in-the-loop oversight work?

A human-in-the-loop model ensures that while AI automates the objective checks for compliance and metadata, human teams maintain oversight of the final creative and strategic decisions, ensuring authenticity and brand nuance.

What are compliance agents in a DAM system?

Compliance agents are automated tools within a DAM that perform rule-based checks to ensure content adheres to requirements such as GDPR, accessibility laws, or industry-specific rules from regulators such as FINRA and the FDA.

How does AI help with asset findability?

AI improves findability by automatically generating descriptive metadata, alt text, and brand-specific tags, allowing users to find assets based on visual or textual content rather than relying on manual file naming.

Why is persistent monitoring important for digital assets?

Persistent monitoring involves continuously scanning connected systems like a CMS or social platforms to ensure that assets being used in-market are still within their licensed terms and meet current brand standards.